Hive supports 3 complex data types: ARRAY, STRUCT and MAP. They are also known as collection or nested data types. They can store multiple values in a single row/column. These data types are not supported by most relational databases.

ARRAY – Just like in other programming languages, it is a collection of elements of the same data type. The elements are indexed, and you can retrieve a value with column_name[index] (indexes start at 0). Let's say we have a file with 2 columns, Name and Score, where the Score column contains values separated by $:

Smith,23$45

Now let's create a table and display the first score of each player. The first score is fetched by passing its index to the column name. Also note that we have to specify the separator for the Array column using collection items terminated by.

STRUCT – This is a collection of named fields where each field can be of any primitive datatype. Fields in a Struct can be accessed using the dot (.) operator. Suppose the first column contains the player name and the next column contains 2 pieces of information, game name and score. Here a single column holds 2 different kinds of data, which need to be stored using different data types. Just like Array, we have to specify the separator for the STRUCT column using collection items terminated by.

Select name,gamescore.game_name as game_name,gamescore.score as score from game

MAP – When we need to store data as key-value pairs, we can use the Map data type. For example, the first column is the Name field and the second column contains key-value pairs:

Smith,Maths:87$Physics:37

Here the values can be accessed by providing the key to the column name, as in column_name['key'].

Select name,mark['Physics'] as Physics_Mark from marks

Besides the complex types, Hive supports 2 miscellaneous data types: BOOLEAN and BINARY. For date handling, note that you can convert TIMESTAMP and STRING to DATE.
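The three complex-type examples can be sketched end to end in HiveQL. This is a minimal sketch under some assumptions: the table names game and marks and the column names gamescore and mark come from the queries in the text, while the table name player_scores, the column types, the field delimiter ',' and the key delimiter ':' are assumptions inferred from the sample rows.

```sql
-- ARRAY: input rows look like  Smith,23$45
CREATE TABLE player_scores (           -- hypothetical table name
  name  STRING,
  score ARRAY<INT>
)
ROW FORMAT DELIMITED
  FIELDS TERMINATED BY ','             -- separates Name from Score
  COLLECTION ITEMS TERMINATED BY '$'   -- separates the array elements
STORED AS TEXTFILE;

-- Array indexes start at 0, so score[0] is the first score
SELECT name, score[0] AS first_score FROM player_scores;

-- STRUCT: one column carrying a game name and a score
CREATE TABLE game (
  name      STRING,
  gamescore STRUCT<game_name:STRING, score:INT>
)
ROW FORMAT DELIMITED
  FIELDS TERMINATED BY ','
  COLLECTION ITEMS TERMINATED BY '$'   -- separates the struct fields
STORED AS TEXTFILE;

-- Struct fields are read with the dot operator
SELECT name,
       gamescore.game_name AS game_name,
       gamescore.score     AS score
FROM game;

-- MAP: input rows look like  Smith,Maths:87$Physics:37
CREATE TABLE marks (
  name STRING,
  mark MAP<STRING, INT>
)
ROW FORMAT DELIMITED
  FIELDS TERMINATED BY ','
  COLLECTION ITEMS TERMINATED BY '$'   -- separates one key-value pair from the next
  MAP KEYS TERMINATED BY ':'           -- separates a key from its value
STORED AS TEXTFILE;

-- Map values are read by key
SELECT name, mark['Physics'] AS Physics_Mark FROM marks;
```

Declaring the delimiters in the table definition lets Hive split each text row into the nested structure at read time, so the data file itself stays a plain delimited file.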
Hive supports 3 date/time types: TIMESTAMP, DATE and INTERVAL.

TIMESTAMP – Supports UNIX timestamp with optional nanosecond precision. If the input is of type Integer, it is interpreted as a UNIX timestamp in seconds. If the input is of type Floating Point, it is interpreted as a UNIX timestamp in seconds with decimal precision. If the input is of type String, it follows the format "YYYY-MM-DD HH:MM:SS.fffffffff" (9 decimal place precision).

DATE – It specifies the date in YEAR/MONTH/DAY format as YYYY-MM-DD.

Hive supports 3 string data types: CHAR, VARCHAR and STRING.

CHAR – Similar to other programming languages, this is of fixed length. If you define char(10) and the input value is 6 characters long, the remaining 4 are filled with spaces.

VARCHAR – This works like CHAR, but it takes a variable length instead of a fixed length. If you define varchar(10) and the input value is 6 characters long, only those 6 characters are stored and no additional space is reserved. If the string is longer than the defined length, the value is truncated.

STRING – Strings are expressed with single quotes (' ') or double quotes (" "). The maximum length of a String is debatable, but I found a good answer on StackOverflow which suggests it could be 2 GB.

Points to keep in mind for numeric data types:

- When we provide a number, Hive by default considers it to be of Integer data type.
- When the number is bigger than the Integer range, Hive automatically considers it as BIGINT.
- If we want to specify that the number is of a different type, we need to add a postfix to the integer: Y for TINYINT, S for SMALLINT and L for BIGINT.
- The default DECIMAL is (10,0) when precision and scale are not defined.
- NUMERIC is the same as DECIMAL from Hive 3.0.
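The literal postfixes and conversions described here can be tried in a single query. This is a minimal sketch, assuming a Hive version that allows SELECT without a FROM clause; the column aliases and the sample values are made up for illustration.

```sql
SELECT
  100Y AS tiny_val,                                 -- Y postfix: TINYINT literal
  100S AS small_val,                                -- S postfix: SMALLINT literal
  100  AS int_val,                                  -- no postfix: treated as INT
  100L AS big_val,                                  -- L postfix: BIGINT literal
  CAST(12.7 AS DECIMAL) AS dec_val,                 -- no precision/scale given: DECIMAL(10,0)
  CAST('hello world' AS VARCHAR(5)) AS short_text,  -- longer than 5 characters, so it is truncated
  CAST('2019-07-15' AS DATE) AS date_from_string,   -- STRING to DATE
  CAST(CAST('2019-07-15 10:30:00' AS TIMESTAMP) AS DATE) AS date_from_ts;  -- TIMESTAMP to DATE
```

On older Hive versions the same expressions can be selected from any existing one-row table instead.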

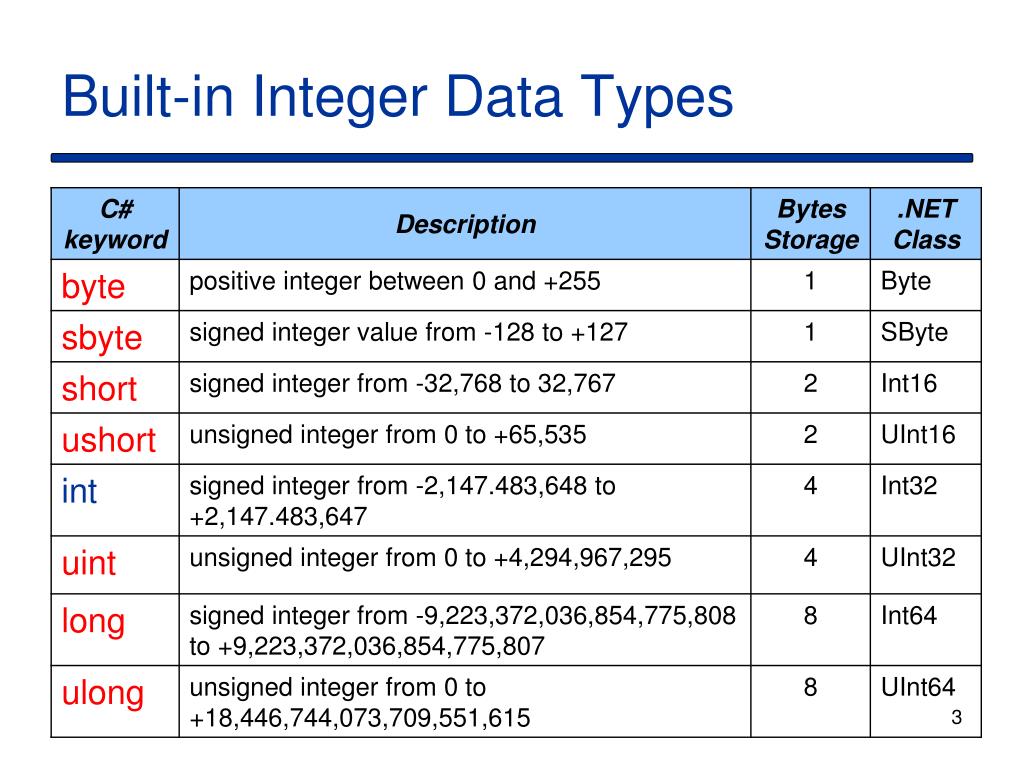

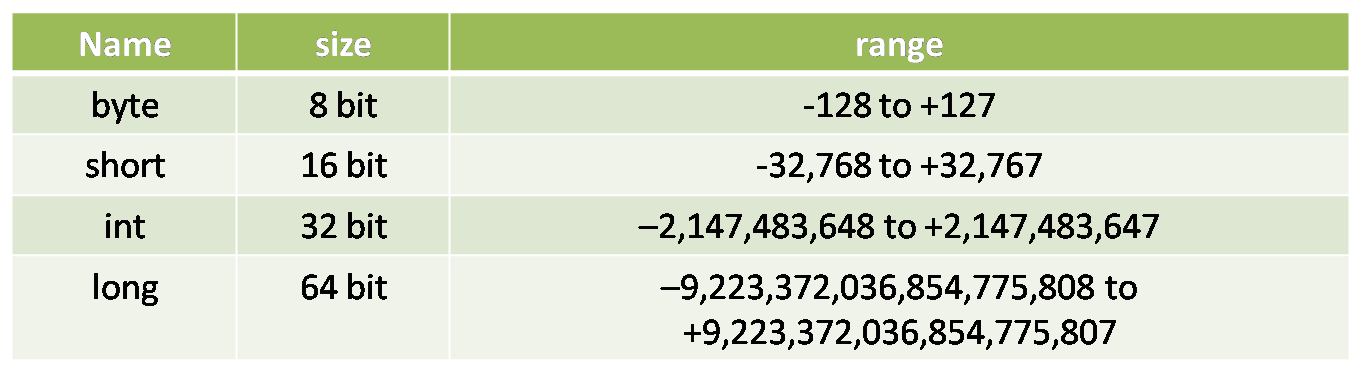

The different categories of Primitive Data Types are as follows: Numeric Data Types, Date/Time Data Types, String Data Types and Miscellaneous Data Types.

The numeric data types supported in Hive include:

- TINYINT (1-byte signed integer, from -128 to 127)
- FLOAT (4-byte single precision floating point number)
- DOUBLE (8-byte double precision floating point number)
- DOUBLE PRECISION (this is an alias of DOUBLE)
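As a quick illustration, the numeric types listed here can be declared together as columns of one table. This is only a sketch: the table name numeric_demo and the column names are hypothetical, and DOUBLE PRECISION assumes a Hive version recent enough to accept that alias.

```sql
CREATE TABLE numeric_demo (      -- hypothetical table name
  tiny_col   TINYINT,            -- 1-byte signed integer, -128 to 127
  float_col  FLOAT,              -- 4-byte single precision
  double_col DOUBLE,             -- 8-byte double precision
  dp_col     DOUBLE PRECISION    -- alias of DOUBLE
);

-- Shows the column types Hive actually recorded for the table
DESCRIBE numeric_demo;
```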
